Ask a CISO what authenticates a user logging into a critical system, and the answer is specific: IAM, MFA, conditional access, session controls. Ask the same CISO what verifies the person calling the help desk to reset those credentials, and the answer is usually an agent making a judgment call.

That is the gap attackers are now operating in at scale.

Voice phishing is no longer a fringe vector

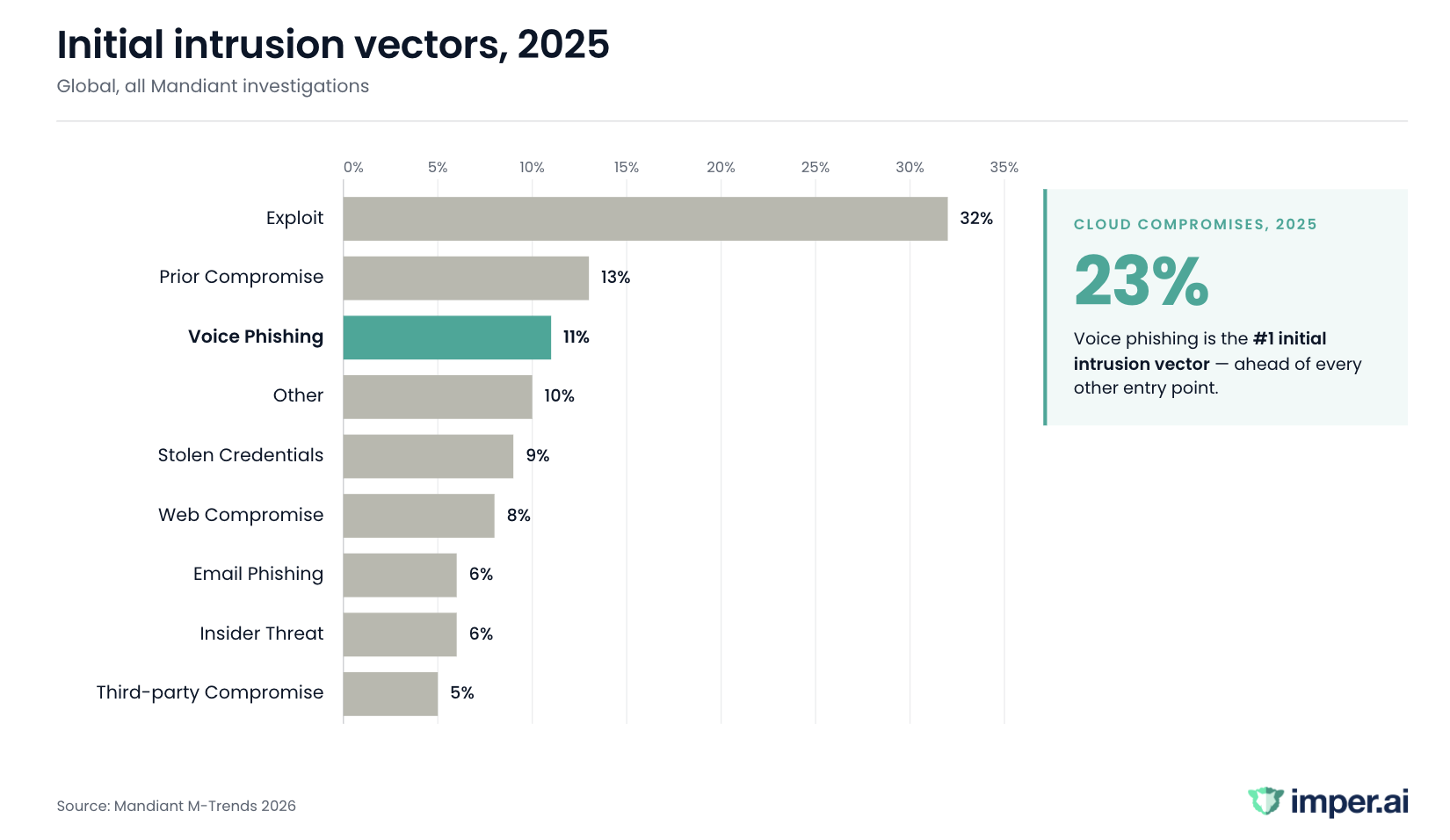

Mandiant’s M-Trends 2026 report flags voice phishing as the top initial intrusion vector for cloud compromises in 2025. Across on-prem and cloud environments combined, it has risen to #2 globally, while email phishing has declined over the same period.

Mandiant is explicit about why this matters: interactive social engineering is “significantly more resilient against automated technical controls” than email-based phishing. A live human steering a conversation in real time cannot be filtered at the gateway, quarantined by an email security product, or caught by a URL sandbox.

The attacker is past every technical control the moment an agent picks up the phone.

Who is running these campaigns

Two threat clusters account for most of what’s being reported. UNC3944 (Scattered Spider) targets help desk staff by impersonating employees to request password resets and MFA changes. UNC6040 ran voice phishing campaigns throughout the first half of 2025, compromising dozens of Salesforce environments by calling employees and persuading them to authorize attacker-controlled applications.

No malware. No zero-days. A phone and a pretext.

Why MFA hardening created this problem

MFA is not failing. MFA is working, and that success is what created this shift.

As phishing-resistant MFA, number matching, and conditional access became standard in enterprise IAM, the login stopped being the weak point. Attackers did the rational thing: they stopped attacking the login. They started attacking the workflows where MFA cannot be present — account recovery, credential resets, new-device enrollment, onboarding.

In each of those workflows, identity is verified by a human conversation under time pressure. The controls that secure a session are structurally absent from the workflows that decide who gets a session in the first place.

The downstream consequence is account takeover (ATO). Most ATO prevention tooling inspects login-time signals such as credential stuffing, session anomalies, and device posture at authentication. That tooling does not see help desk vishing, because the attacker is not logging in. The attacker is calling into the recovery workflow and asking for the credentials to be reset. By the time the attacker does log in, it is with freshly reset credentials and often a newly enrolled MFA factor, so the login itself looks legitimate.

Why training doesn’t close the gap

Security awareness training is a useful control, though it is not the right control for this attack.

Help desk agents are measured on resolution speed, first-contact fix rates, and customer satisfaction. They are trained to be responsive. The attacker’s entire advantage is that they sound like a cooperative employee making a reasonable request through the correct channel. The request itself is rarely suspicious.

What makes the attack effective is that the caller doesn’t need to bypass the verification script. They need to pass it, and the script is designed to let reasonable-sounding people through.

Gartner’s 2026 Predicts research puts it more bluntly: teaching humans to defend against every possible attack pattern has “proven to be an exercise in frustration and uselessness.” As long as a human judgment call is the verification layer, the vector remains open.

Stricter scripts don’t fix this either. Organizations that tighten their verification process discover that attackers study the script and pass it. The caller identifies the employee correctly, knows the recent password reset history, names the right manager, and answers the knowledge-based authentication questions, because much of that information is available in breach corpora or on LinkedIn.

The gap isn’t training discipline. The gap is that humans verifying humans in real time, without supporting infrastructure signals, is a control that does not scale against a motivated adversary.

What actually works: verifying the environment, not the script

The durable detection signal for this attack class isn’t what the caller says — it’s where the caller is operating from.

A legitimate employee requesting a password reset is on a known endpoint, on a familiar network, in an expected location, with consistent behavioral patterns across the session. An attacker impersonating that employee may have the right story, but they cannot simultaneously replicate the endpoint, network, location, and behavior. Each of those signals can be spoofed in isolation. Controlling all of them at once, across a live session, is where impersonation breaks down.
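To make the "all at once" argument concrete, here is a minimal Python sketch under stated assumptions. The `SessionSignals` and `EmployeeBaseline` types, their fields, the helper functions, and the exact-match rules are all hypothetical, and the code is not a description of imper.ai's Impersonation Detection Engine or any other product. It only illustrates the logic of the paragraph above: a session passes when every signal layer matches the employee's baseline at every observation, so getting one or two layers right is not enough.

```python
from dataclasses import dataclass

# Hypothetical model for illustration only: names, fields, and matching
# rules are assumptions, not any vendor's actual detection logic.


@dataclass(frozen=True)
class SessionSignals:
    """One observation of the environment a caller is operating from."""
    device_id: str       # endpoint identifier, e.g. a managed-device ID
    network_asn: int     # autonomous system number of the caller's network
    country: str         # coarse location
    behavior: str        # stand-in label for a behavioral pattern


@dataclass(frozen=True)
class EmployeeBaseline:
    """What is 'normal' for one employee (hypothetical record)."""
    known_devices: frozenset[str]
    known_asns: frozenset[int]
    expected_countries: frozenset[str]
    behavior: str


def failed_layers(baseline: EmployeeBaseline,
                  observations: list[SessionSignals]) -> list[str]:
    """Return the signal layers that mismatched at any point in the session."""
    failures: set[str] = set()
    for obs in observations:
        if obs.device_id not in baseline.known_devices:
            failures.add("endpoint")
        if obs.network_asn not in baseline.known_asns:
            failures.add("network")
        if obs.country not in baseline.expected_countries:
            failures.add("location")
        if obs.behavior != baseline.behavior:
            failures.add("behavior")
    return sorted(failures)


def environment_is_authentic(baseline: EmployeeBaseline,
                             observations: list[SessionSignals]) -> bool:
    """Pass only if every layer matched across the whole live session.

    An empty session counts as unverified rather than as a pass.
    """
    return bool(observations) and not failed_layers(baseline, observations)


baseline = EmployeeBaseline(
    known_devices=frozenset({"laptop-4821"}),
    known_asns=frozenset({64500}),        # documentation-range ASN
    expected_countries=frozenset({"US"}),
    behavior="profile-a",
)

# Legitimate employee: every layer matches on every observation.
legit = [SessionSignals("laptop-4821", 64500, "US", "profile-a")] * 3

# Attacker with the right story and a copied device ID, calling from an
# unfamiliar network and drifting from the usual behavior mid-session.
spoofed = [
    SessionSignals("laptop-4821", 64511, "US", "profile-a"),
    SessionSignals("laptop-4821", 64511, "US", "profile-b"),
]

print(environment_is_authentic(baseline, legit))    # True
print(failed_layers(baseline, spoofed))             # ['behavior', 'network']
print(environment_is_authentic(baseline, spoofed))  # False
```

Exact set membership keeps the sketch short. A real system would more likely score each layer and tolerate legitimate drift, such as travel or a replaced laptop, rather than fail on any single mismatch.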

This is the layer imper.ai’s Impersonation Detection Engine operates in. It analyzes three signal layers simultaneously — network and location, endpoint, and behavior — to detect attacker-controlled environments before the help desk agent touches the ticket. For help desk vishing specifically, that detection runs alongside AI-Driven Contextual Verification, which confirms the caller is the actual employee through dynamic, role-based questions grounded in enterprise context — not static security questions sourced from breached data.

No documents. No biometrics. No manual review. Environment authenticity verified first, person verified second, both before the recovery workflow proceeds.

The audit trajectory

Workforce identity verification, sometimes referred to as Know Your Employee (KYE), is now covered in Gartner’s formal research, with two reports on this control published in Q1 2026. The control is moving toward being an expected, auditable layer on account recovery and hiring workflows.

The question worth asking internally, before the next audit cycle asks it externally: Your IAM stack authenticates the login. What verifies the person requesting the reset?

If the answer is “an agent, using a script,” the gap is already visible. It’s just not yet measured.

Book a 15-minute walkthrough of imper.ai for Help Desk