The IT help desk sits at a unique intersection of trust, urgency, and access. It’s one of the few places in an organization where people regularly ask for sensitive actions like password resets, MFA device changes, and temporary access, and expect fast resolution.

That combination makes it an attractive target. Attackers don’t need software vulnerabilities or malware. They need to sound like someone who belongs. The right role, the right terminology, a believable problem. That’s often enough to move a request forward.

The environment has shifted in ways that make this easier. Remote work, distributed teams, and always-on collaboration mean more help desk interactions happen over voice, chat, and tickets, often without in-person verification. For attackers, that’s opportunity. For help desk teams, it’s pressure. For organizations, it means the help desk has quietly become one of the most critical front lines in defending against impersonation.

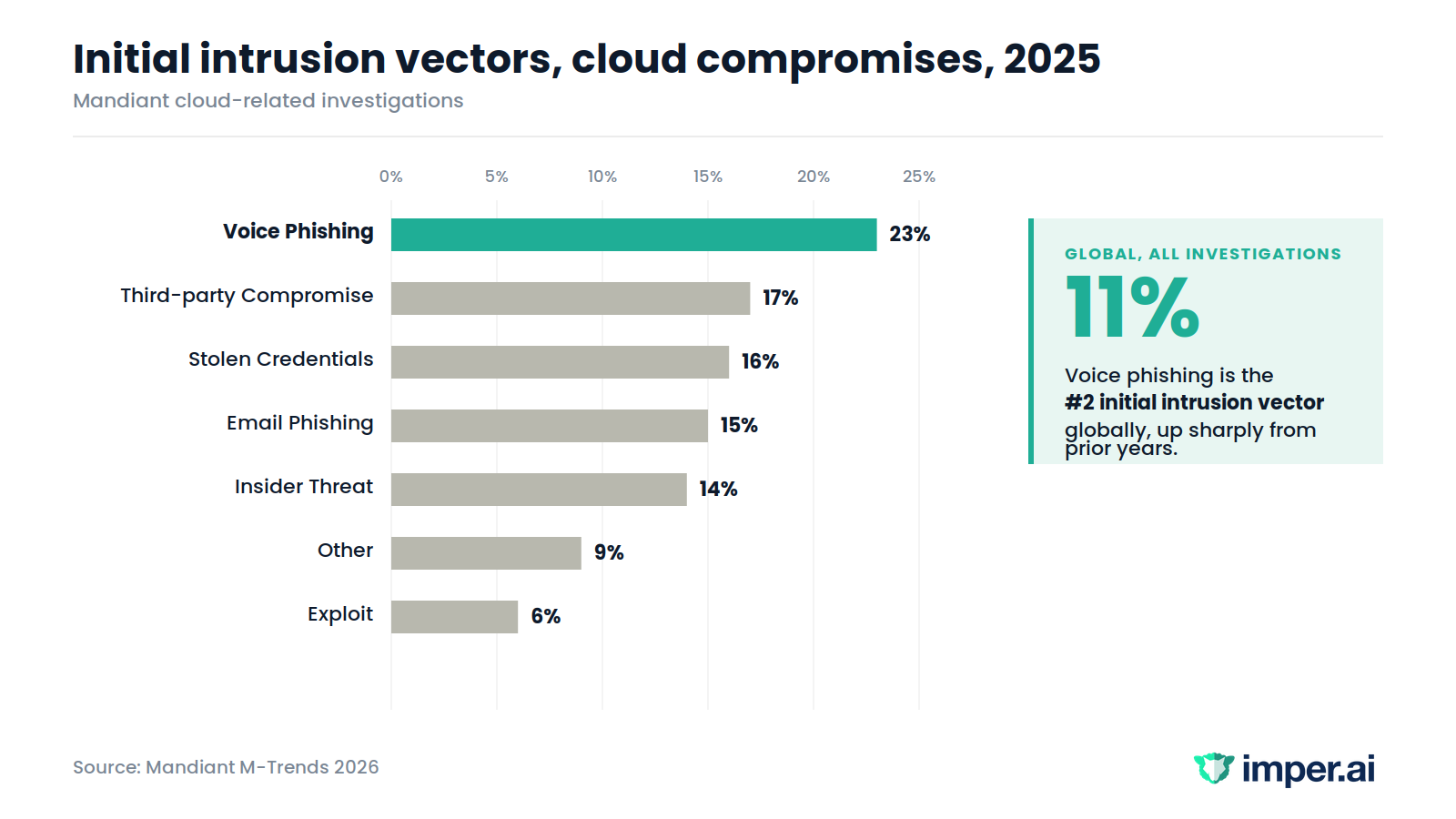

The data backs this up. Voice phishing rose to the second most commonly observed initial infection vector globally in 2025, at 11% of investigations, while email phishing fell from 14% in 2024 to 6% in 2025. In cloud compromises specifically, voice phishing was the number one initial infection vector at 23%. Mandiant explicitly notes that interactive social engineering is significantly more resilient against automated technical controls than email phishing and requires different detection strategies.

In other words: the controls most organizations have invested in don’t see this attack.

The common attack patterns

Help desk attacks work because they blend into everyday workflows. They look like routine requests agents are trained to resolve quickly, not attacks. A few patterns dominate.

Employee impersonation: An attacker calls or messages pretending to be an employee who is locked out, traveling, or under time pressure. The request is simple and reasonable: a password reset, an MFA change, temporary access to a system they need right now. UNC3944, the financially motivated cluster overlapping with public reporting on Scattered Spider, has targeted help desk staff specifically by impersonating employees requesting password resets and MFA changes. The tactic relies on plausibility. The language is internal. The problem fits. The request sounds identical to dozens of legitimate ones agents handle every day.

IT impersonation: Attackers flip the roles and pose as internal IT or security staff, contacting employees directly to “fix an issue” or address suspicious activity. A common variation is to trigger MFA fatigue with repeated push prompts, then follow up with a “support” call offering to help the user resolve it. The goal is cooperation, remote-access tool installation, or credential capture, all under the appearance of legitimate IT assistance.

Multi-channel stacking: Modern attacks rarely rely on a single channel. A Teams message before a phone call. An email ticket followed by a voice request referencing it. UNC6040 used voice phishing across the first half of 2025 to convince targets to provide credentials and authorize an attacker-controlled version of a legitimate SaaS application, with extortion under the ShinyHunters brand following months later. Each channel adds context. By the time the request reaches the agent, it already feels embedded in an existing workflow.

Urgency and escalation: Across all of these, urgency is constant. “I can’t get this job done.” “This is blocking something important.” “My manager needs this fixed now.” These cues are designed to reduce friction and shorten the decision window.

None of this depends on deception that looks unusual. These attacks succeed because they mirror how legitimate help desk interactions normally unfold.
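To make the patterns above concrete, here is a minimal Python sketch that tags a request with the signals described in this section: a sensitive action, urgency language, and contact on another channel beforehand. Everything in it is an assumption for illustration. The `HelpDeskRequest` fields, the action labels, the cue phrases, and the step-up rule are hypothetical and do not come from any real ticketing system or product.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical labels and phrases, chosen only to mirror the patterns above.
SENSITIVE_ACTIONS = {"password_reset", "mfa_change", "temporary_access"}
URGENCY_CUES = ("right now", "blocking", "my manager needs", "urgent")


@dataclass
class HelpDeskRequest:
    action: str                   # what the caller is asking for
    channel: str                  # "voice", "chat", or "ticket"
    message: str                  # what the caller said or wrote
    prior_channels: List[str] = field(default_factory=list)


def risk_signals(req: HelpDeskRequest) -> List[str]:
    """Return the names of the signals present in a request."""
    signals = []
    if req.action in SENSITIVE_ACTIONS:
        signals.append("sensitive_action")
    text = req.message.lower()
    if any(cue in text for cue in URGENCY_CUES):
        signals.append("urgency_language")
    # Earlier contact on a different channel (for example, chat before a call).
    if any(ch != req.channel for ch in req.prior_channels):
        signals.append("multi_channel_lead_in")
    return signals


def needs_step_up(req: HelpDeskRequest) -> bool:
    """Assumed rule: a sensitive action plus at least one other signal."""
    signals = risk_signals(req)
    return "sensitive_action" in signals and len(signals) >= 2


request = HelpDeskRequest(
    action="mfa_change",
    channel="voice",
    message="My manager needs this fixed now.",
    prior_channels=["chat"],
)
print(risk_signals(request))
print(needs_step_up(request))
```

Because these signals also appear in plenty of legitimate requests, a rule like this is best read as a way to name the patterns, not as a detection method in its own right.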

The deepfake distraction

As awareness of help desk impersonation grows, many organizations look first at tools that analyze content itself: voice, video, text. Synthetic media detection has a real role in modern security stacks, particularly for brand protection and the kind of high-stakes public deception that crosses into national security territory, like a fabricated video of a head of state, a manipulated earnings call, or a forged broadcast.

But for help desk vishing, content analysis is the wrong layer.

Most successful help desk impersonation doesn’t use synthetic media at all. The voice is real and the request is reasonable. Attackers focus on timing, context, and familiarity, calling during busy periods, referencing common tools, describing problems that fit everyday volume. When the request aligns with expectations, it moves forward.

Even when voice cloning is used, it narrows the margin for hesitation rather than creating the attack. The attack already works because it sounds plausible.

Content defenses also exist in a constant adaptation cycle. As generative AI evolves, detection has to catch up. Useful for some workflows. Insufficient for the help desk, where the question is not whether the voice is real, but whether the interaction itself behaves as expected.

Final thoughts

The IT help desk is a critical trust point. It’s where problems get solved, access gets restored, and people work fast. That’s exactly why attackers target it.

Awareness training, content filters, and scripted verification all help. None of them cover what happens inside a live interaction when the voice is real and the request sounds reasonable. That’s the gap imper.ai is built to close.

Protecting the help desk means protecting the entire workforce.
