When security leaders map their attack surface, the hiring pipeline rarely makes the list. It should.

Remote hiring has changed who organizations let inside, and how. Trust decisions now happen across screens, video calls, and digital artifacts. Attackers have noticed. The result is a category of threat that existing controls were never built to catch: hiring fraud.

Resume content is one surface, and AI has made it trivially easy to manipulate. But the infrastructure underneath is what gives the operator away, and that is the layer most hiring controls do not see.

The threat is documented, active, and primarily nation-state

Since at least 2022, the Democratic People’s Republic of Korea has run a structured program placing IT workers into Western technical roles to generate hard currency, with revenue assessed by the US Department of Justice to support sanctions evasion and weapons programs. Individual operators earn up to $300,000 annually, with the regime retaining up to 90 percent of those earnings (Unit 42 Wagemole research).

The activity is tracked across vendors under multiple aliases: Wagemole (Unit 42), UNC5267 (Google Mandiant), Nickel Tapestry (Secureworks), and Jasper Sleet (Microsoft). It is also a component of the broader Contagious Interview cluster (GitLab). According to Mandiant, nearly every Fortune 500 CISO interviewed has acknowledged hiring at least one DPRK IT worker.

The TTP set has been consistent across the entire period. So has the gap that makes it work.

Why traditional hiring controls do not see this

Background checks validate a name against a record. Document verification confirms a face matches an ID at one moment in time. Skills assessments test knowledge a remote operator can provide in real time. None of these controls instrument the device or network layer during the live session.

That is where the tradecraft lives. And that is what makes the program durable.

What the attack actually looks like

In Q1 2026, imper.ai’s Impersonation Detection Engine processed pre-employment verification for 600 candidates at a global enterprise. Four were running confirmed DPRK IT worker tradecraft. Each exhibited overlapping infrastructure-layer indicators mapped to UNC5267 and to MITRE ATT&CK techniques T1090, T1090.003, T1219, and T1585, which cover proxy use, multi-hop proxying, remote access tooling, and account establishment.

The signals were consistent across all four:

Astrill VPN. Documented across Unit 42 and Mandiant research as DPRK IT worker infrastructure. One candidate ran Astrill at the OS level alongside CyberGhost and Urban VPN as browser extensions: three providers stacked. No legitimate candidate use case matches this profile.

Remote-controlled device tooling during interviews. The person on camera is not always the person operating the session. A coached operator can present credibly while a third party drives the technical portion in real time.

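Multi-hop proxy routing with latency mismatch. Candidates configured US timezones while exhibiting network latency consistent with East Asian origin. Timezone is trivially manipulated. Latency has a physical floor set by distance: an operator can add delay but cannot remove it. A session that claims a US location yet never drops below trans-Pacific round-trip times is a signal the operator cannot engineer away.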

VoIP numbers paired with low-tenure email accounts. Programmatically provisioned phone numbers and newly registered Gmail addresses. Individually inconclusive. Together, a purpose-built synthetic identity.

No background check, skills assessment, or document-based identity verification would have surfaced these indicators.
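The latency argument can be made concrete with a little arithmetic. The sketch below is illustrative only and is not imper.ai's implementation: the function names, the 60 ms slack allowance, and the coordinates are all assumptions. It computes the physical round-trip floor between a claimed location and a measurement server, then flags a session whose best observed round-trip time sits far above that floor.

```python
import math

# Assumption for illustration: light in optical fiber covers roughly
# 200 km per millisecond (about two thirds of its speed in vacuum).
FIBER_KM_PER_MS = 200.0


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two points on Earth, in kilometres."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km):
    """Physical lower bound on round-trip time over fiber."""
    return 2 * distance_km / FIBER_KM_PER_MS


def latency_mismatch(claimed, server, observed_min_rtt_ms, slack_ms=60.0):
    """True when the best observed RTT is far above what the claimed
    location requires. slack_ms is a hypothetical allowance for routing
    detours, Wi-Fi, and queuing; a real system would calibrate it."""
    floor = min_rtt_ms(great_circle_km(*claimed, *server))
    return observed_min_rtt_ms > floor + slack_ms


# Hypothetical example: candidate claims New York, server is in Virginia.
new_york = (40.71, -74.01)
virginia = (39.04, -77.49)
print(latency_mismatch(new_york, virginia, observed_min_rtt_ms=190.0))
print(latency_mismatch(new_york, virginia, observed_min_rtt_ms=25.0))
```

A high round-trip time alone is not proof of origin, since congestion or a legitimate VPN can add delay. It earns weight only in combination with the other indicators in the list above.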

Onboarding is where continuity breaks

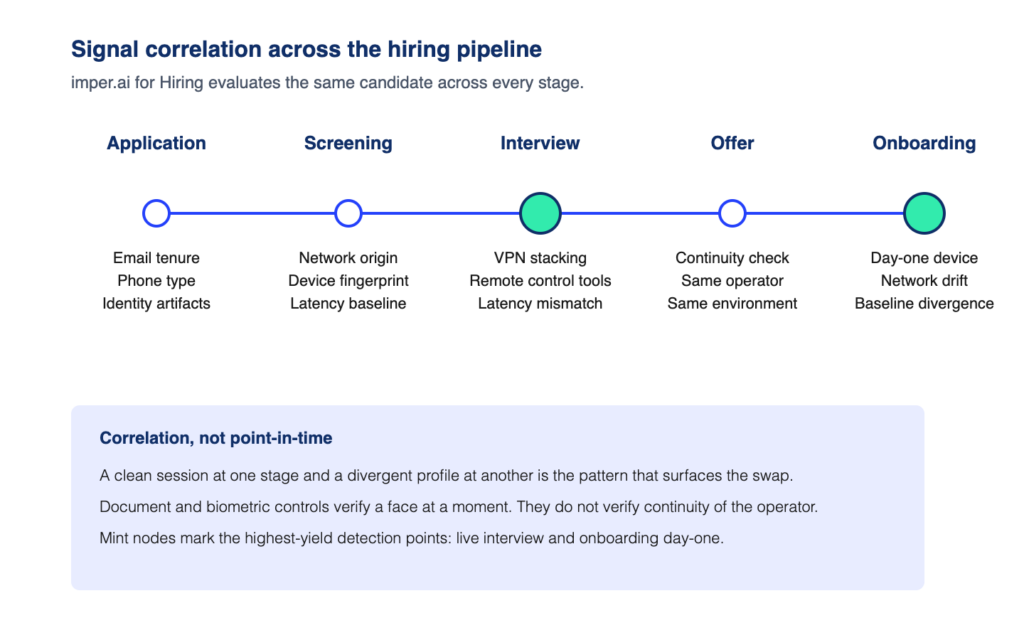

The interview is one signal capture point. Onboarding is another. And the gap between them is where a different category of attack plays out.

A candidate can pass interviews legitimately and a different operator can show up on day one with the issued credentials. The infrastructure signals at onboarding will not match the baseline imper.ai built during the interview process: different device fingerprint, different network origin, different latency profile, different identity artifacts. The person who signed the offer is not the person logging in.

This is why signal correlation across the pipeline matters more than verification at any single moment. imper.ai for Hiring evaluates the same candidate across application, screening, interview, offer, and onboarding. A clean session at one stage and a divergent profile at another is the pattern that surfaces the swap. Document-based and biometric controls cannot see this. They verify a face at a point in time. They do not verify continuity of the operator.
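A minimal sketch of the continuity check described above, under stated assumptions: the `SessionProfile` fields, their example values, and the two-field threshold are hypothetical and chosen only to mirror the signals named in the text. It compares an onboarding session against the interview baseline and reports which attributes diverged.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SessionProfile:
    """Hypothetical infrastructure snapshot captured at one pipeline stage."""
    device_fingerprint: str
    network_origin: str      # e.g. an ASN or coarse region label
    latency_band: str        # e.g. "domestic" or "transoceanic"
    phone_type: str          # e.g. "mobile" or "voip"


def divergent_fields(baseline, current):
    """Names of the attributes that differ between two snapshots."""
    return [f.name for f in fields(baseline)
            if getattr(baseline, f.name) != getattr(current, f.name)]


def operator_swap_suspected(baseline, current, min_divergent=2):
    """Flag when several independent attributes change at once.
    min_divergent is an assumed threshold, not a documented value."""
    return len(divergent_fields(baseline, current)) >= min_divergent


interview = SessionProfile("fp-a1", "us-residential", "domestic", "mobile")
onboarding = SessionProfile("fp-z9", "vpn-exit", "transoceanic", "mobile")

print(divergent_fields(interview, onboarding))
print(operator_swap_suspected(interview, onboarding))
```

Requiring more than one divergent attribute is a design choice: a new laptop alone is ordinary, while a new device, new network origin, and new latency profile arriving together on day one is the pattern the text describes.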

Hiring fraud is a security problem, not an HR problem

The compliance exposure compounds the security one. Paying a DPRK IT worker’s salary is an OFAC sanctions violation regardless of whether the employer knew. The same unmonitored gap creates two distinct categories of risk: insider access through a trusted hiring relationship, and direct sanctions liability.

Hiring pipeline security has historically meant background checks and document verification. Neither operates at the layer where this tradecraft is visible.

What detection at this layer requires

imper.ai for Hiring is the Impersonation Detection Engine applied across the hiring pipeline. It correlates device, network, and identity signals across multiple touchpoints in the process: application, screening, interviews, offer, onboarding. A candidate who presents clean signals at screening but shows Astrill VPN and a remote-controlled device at the technical interview is flagged on the pattern, not on a single moment in time.
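To illustrate what flagging on the pattern rather than a single moment could look like, here is a hedged sketch. The indicator names, weights, and threshold are invented for the example and do not describe the product's actual scoring. Weak indicators such as a VoIP number score low on their own, and the case is flagged only when indicators seen across stages add up.

```python
# Pipeline stages in the order named in the article.
STAGES = ["application", "screening", "interview", "offer", "onboarding"]

# Hypothetical weights: strong tradecraft indicators score higher than
# signals that are individually inconclusive.
WEIGHTS = {
    "astrill_vpn": 3,
    "remote_control_tool": 3,
    "latency_mismatch": 3,
    "stacked_vpns": 2,
    "voip_number": 1,
    "new_email": 1,
}
FLAG_THRESHOLD = 5  # assumed value for illustration


def case_history(observations):
    """List (stage, newly seen indicators) in pipeline order."""
    seen, history = set(), []
    for stage in STAGES:
        new = observations.get(stage, set()) - seen
        if new:
            history.append((stage, sorted(new)))
            seen |= new
    return history


def case_score(observations):
    """Sum the weights of every distinct indicator seen at any stage."""
    seen = set().union(*observations.values())
    return sum(WEIGHTS.get(name, 0) for name in seen)


def flagged(observations):
    return case_score(observations) >= FLAG_THRESHOLD


candidate = {
    "application": {"voip_number", "new_email"},
    "screening": set(),
    "interview": {"astrill_vpn", "remote_control_tool"},
}
print(case_history(candidate))
print(case_score(candidate), flagged(candidate))
```

The case history doubles as the artifact a security reviewer would read: it shows which stage introduced which indicator, so a clean screening followed by a divergent interview is visible at a glance.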

imper.ai does not analyze what candidates look like. It analyzes the environment they operate from. No documents. No biometrics. No manual review. The recruiter process is unchanged. Risk signals and a case history surface for security review.

When the user changes after day one

Hiring fraud and shadow workforce are the same operator problem at different stages.

A candidate who slips through hiring becomes a shadow workforce problem on day one. A legitimate hire who later outsources their work to a third party, shares credentials, or hands a session to a remote operator is a shadow workforce problem that hiring controls cannot retroactively solve. The shadow operator has real work knowledge from doing the actual job. They will pass any point-in-time identity check, including knowledge-based questioning.

imper.ai for Shadow Workforce extends the same Impersonation Detection Engine into continuous verification post-hire. Unlike hiring fraud detection, which correlates signals across discrete pipeline touchpoints, shadow workforce detection watches for continuous drift from a baseline. imper.ai builds a behavioral and infrastructure baseline at onboarding and monitors for changes against it: device fingerprint changes, location inconsistencies, network infrastructure changes, and shifts in execution patterns. When anomalies accumulate, a step-up privileged access challenge fires at the moment of a high-risk action, before the action completes.
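The accumulate-then-challenge behavior can be sketched as follows. This is an assumption-laden illustration rather than the product's logic: the `DriftMonitor` class, the anomaly weights, the threshold, and the list of high-risk actions are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical weights per drift type named in the text.
DRIFT_WEIGHTS = {
    "device_fingerprint": 3,
    "location": 2,
    "network": 2,
    "execution_pattern": 1,
}

# Hypothetical set of actions that warrant a challenge.
HIGH_RISK_ACTIONS = {"export_source", "rotate_credentials", "grant_admin"}


@dataclass
class DriftMonitor:
    threshold: int = 4                      # assumed value
    score: int = 0
    events: list = field(default_factory=list)

    def observe(self, anomaly):
        """Record one deviation from the onboarding baseline."""
        self.score += DRIFT_WEIGHTS.get(anomaly, 0)
        self.events.append(anomaly)

    def authorize(self, action):
        """Decide before the action runs: 'allow' or 'step_up'."""
        if action in HIGH_RISK_ACTIONS and self.score >= self.threshold:
            return "step_up"
        return "allow"

    def challenge_passed(self):
        """Reset accumulated drift after a successful challenge."""
        self.score = 0
        self.events.clear()


monitor = DriftMonitor()
monitor.observe("location")
first = monitor.authorize("grant_admin")       # drift still below threshold
monitor.observe("device_fingerprint")
second = monitor.authorize("grant_admin")      # drift has accumulated
routine = monitor.authorize("read_wiki")       # low-risk action is never challenged
print(first, second, routine)
```

Gating the challenge on both accumulated drift and action risk keeps routine work uninterrupted while placing the check at the point where a swapped operator could do the most damage.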

The same operator question, asked continuously instead of once.

Authentication proves login. Verification proves identity. For the workforce, verification has to operate at the layer where the impersonation is actually happening, and it has to keep operating after the hire is made.
